The Visual Computing Laboratory (VCL) at Connecticut College was founded on August 1, 2014 by Professor James Lee and five student researchers: Danya Alrawi ’16, Raymond Coti ’16, Jamie Drayton ’17, Matt Rothendler ’15, and Will Stoddard ’17. The lab is an interdisciplinary research space dedicated to exploring how computational technologies can expand human creativity, perception, and interaction. Our work focuses on visualization and visual computing, including real-time computer graphics, information visualization, immersive virtual environments, human-computer interaction, user experience design, affective computing, and physical computing. At VCL, undergraduate researchers collaborate closely with faculty to design, build, and study interactive systems that bridge art, technology, and scientific inquiry. Projects often combine software development, hardware prototyping, and creative experimentation to explore new forms of visual communication and digital experience. Below you can find current and past student researchers as well as selected research projects. If you are interested in joining the lab or have ideas that align with our research interests, please reach out via email.

Research @ Visual Computing Laboratory

Research Students

Todd Shriber

2025

Jingjing Chang

2025

Bazeed Shahzad

2024

Eiman Rana

2023

Robert Jensen

2022

Madison Ford

2022

Tyler Silbey

2022

Szymon Wozniak

2021

Katy Pelletier

2021

Lauren Cerino

2021

Alex Bukovac

2020

Bempa Ashia

2020

Kylie Wilkes

2020

Rishma Mendhekar

2019

Yi Xie

2019

Jessy Quint

2019

Julia Dearden

2019

Roxanne Low

2019

Jaleel Watler

2019

Isaih Porter

2018

Jess Napolitano

2017

Jamie Drayton

2017

Alyssa Klein

2016

Walter Florio

2016

Research Projects

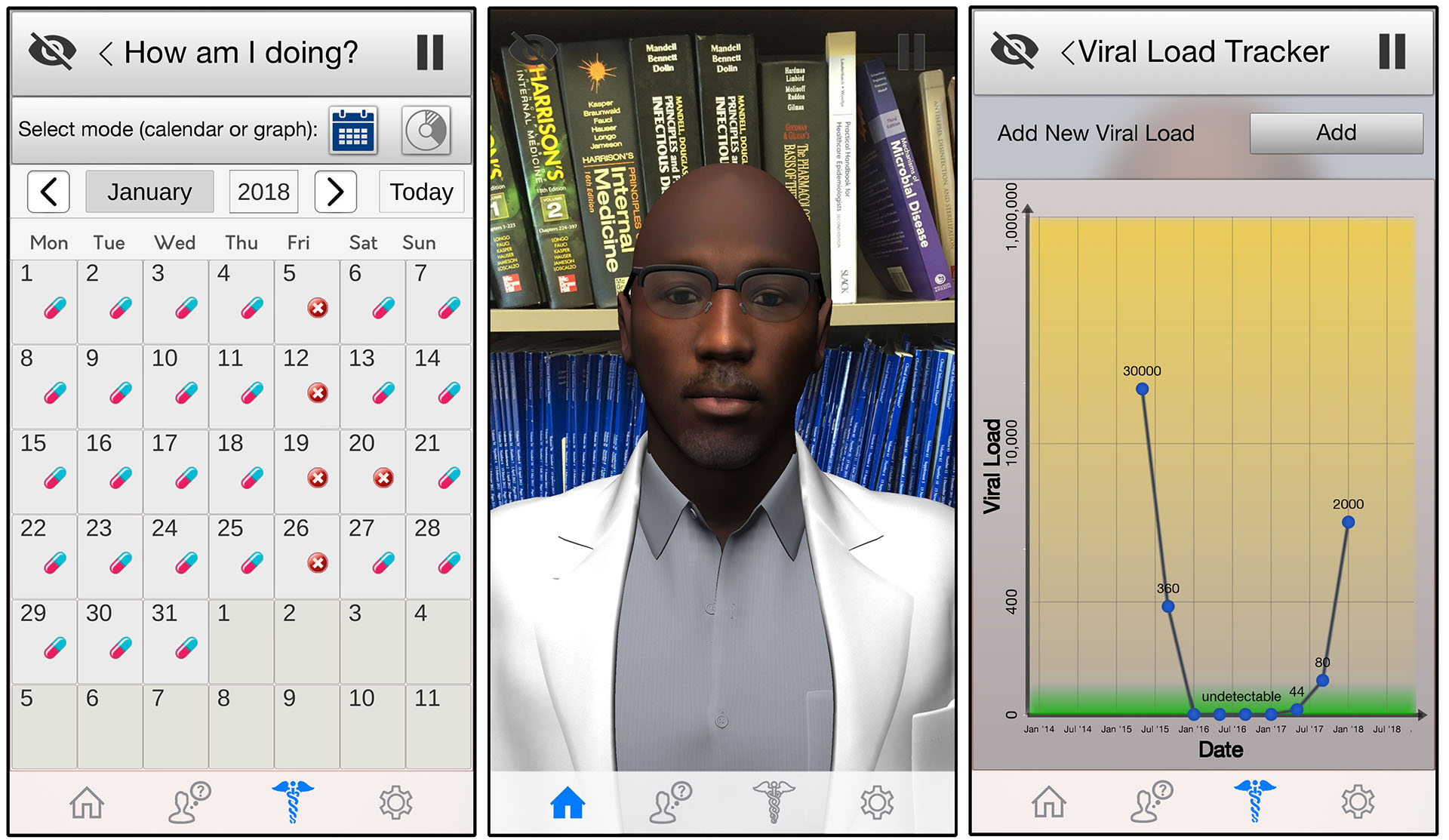

A mobile phone intervention using a relational human talking avatar to promote multiple stages of the HIV Care Continuum in African American MSM

Mark Dworkin and James Lee

NIH-funded project (1R01MH116721-01A1, 2019-2023). HIV-positive African American men who have sex with men (AAMSM) have the lowest percentage of retention in care and are less likely to have viral suppression. My Personal Health Guide is an innovative mobile phone application that uses a talking relational human avatar to engage HIV-positive AAMSM in adherence and retention in care. Development of this app was informed by the Information Motivation Behavioral Skills Model. A pilot study demonstrated acceptability, enthusiasm for the app, and preliminary efficacy.

Evolving Video Game NPC Behavior using Generative AI

James Lee, Todd Shriber '25

This research focuses on creating an interactive NPC guide in Elden Ring using the Unity OpenAI API plugin. The NPC dynamically generates personalized responses based on player inputs, such as location, stats, and weapons, and provides real-time guidance. By integrating generative AI, the project explores how advanced NPC behavior can enhance player immersion and adapt to in-game situations, offering a more interactive and lifelike gaming experience.

Real-Time Communication with Metahuman Technology

James Lee, Jingjing Chang '25, Rahil Aggarwal-Wheeler '28

This project aims to develop a Metahuman-based virtual assistant that can engage in real-time, empathetic communication. Two methods were explored: OpenAI's Realtime API for seamless integration, and a combined approach using local Whisper Speech Recognition with GPT and Text-to-Speech services. Both are integrated with NVIDIA's Audio2Face and Unreal Engine for lifelike facial expressions. By addressing challenges such as cultural and linguistic barriers, low literacy, and emotional sensitivity, this project demonstrates the transformative potential of Metahuman technology in communication-critical environments.

Elevators are essential for navigating tall buildings and for letting people with disabilities get around independently. This research develops a virtual reality (VR) tool to educate people about elevators and simulate the experience of being in one. The tool is immersive and incorporates both the visual and auditory sensations that patients encounter while riding in an elevator.

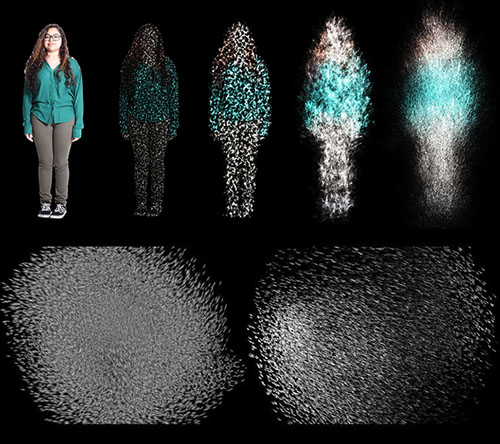

This research compares the performance of large-scale particle simulation on the GPU against a conventional CPU implementation. The current implementation runs at around 60 FPS at 4K resolution with about 8 million particles, a 200x speedup over the CPU version. We deployed this program for the art installation of The Posture Portrait Project at Connecticut College. We also implemented a boid flocking algorithm on the GPU. This work was presented at CCSCNE, April 2018.
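For readers unfamiliar with boid flocking, the following is a minimal CPU reference sketch in Python of the three classic rules (separation, alignment, cohesion). It is not the lab's GPU implementation, which the description above does not detail; the function name `step_boids` and every parameter (`radius`, the rule weights, `max_speed`) are illustrative assumptions.

```python
import math


def step_boids(positions, velocities, radius=1.0, dt=0.1,
               w_sep=1.5, w_ali=1.0, w_coh=1.0, max_speed=2.0):
    """Advance a 2D flock one time step; returns (new_positions, new_velocities).

    All parameter names and default values are hypothetical, chosen for illustration.
    """
    new_velocities = []
    for i, ((px, py), (vx, vy)) in enumerate(zip(positions, velocities)):
        sep_x = sep_y = ali_x = ali_y = coh_x = coh_y = 0.0
        neighbors = 0
        for j, ((qx, qy), (ux, uy)) in enumerate(zip(positions, velocities)):
            if i == j:
                continue
            dx, dy = qx - px, qy - py
            dist = math.hypot(dx, dy)
            if dist >= radius:
                continue  # outside the perception radius
            neighbors += 1
            if dist > 0.0:
                # Separation: steer away, more strongly from closer neighbors.
                sep_x -= dx / (dist * dist)
                sep_y -= dy / (dist * dist)
            # Alignment and cohesion accumulate neighbor velocity and position.
            ali_x += ux
            ali_y += uy
            coh_x += qx
            coh_y += qy
        if neighbors:
            # Alignment: steer toward the neighbors' average velocity.
            ali_x = ali_x / neighbors - vx
            ali_y = ali_y / neighbors - vy
            # Cohesion: steer toward the neighbors' center of mass.
            coh_x = coh_x / neighbors - px
            coh_y = coh_y / neighbors - py
            vx += dt * (w_sep * sep_x + w_ali * ali_x + w_coh * coh_x)
            vy += dt * (w_sep * sep_y + w_ali * ali_y + w_coh * coh_y)
        speed = math.hypot(vx, vy)
        if speed > max_speed:
            # Clamp speed so the flock stays numerically stable.
            vx, vy = vx * max_speed / speed, vy * max_speed / speed
        new_velocities.append((vx, vy))
    new_positions = [(px + vx * dt, py + vy * dt)
                     for (px, py), (vx, vy) in zip(positions, new_velocities)]
    return new_positions, new_velocities
```

Within one step, each boid's update reads only the previous positions and velocities, so the outer loop iterations are independent of one another. That independence is what makes this kind of simulation a natural fit for one-thread-per-particle execution on a GPU.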

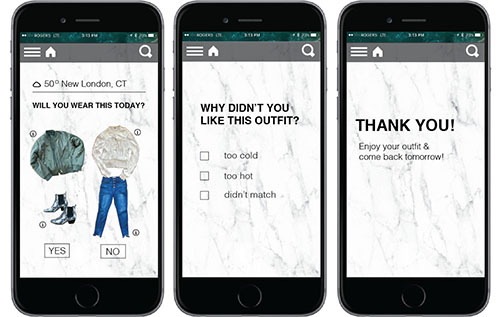

A user-focused application for clothing choices that recommends items from your wardrobe based on weather. A rule-based relationship between weather and clothing was established by surveying 100 people. To create a "smart stylist," we used case-based reasoning with machine learning to learn from user feedback and adapt recommendations. This work was presented at CCSCNE, April 2018.
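As a rough illustration of the case-based reasoning idea, the sketch below retrieves the stored case closest to the current weather and lets user feedback shift future choices. The features (`temp_c`, `rain`), the distance weights, and the class names `Case` and `WardrobeAdvisor` are all assumptions made for this example; the description above does not specify the project's actual features, survey rules, or learning method.

```python
from dataclasses import dataclass


@dataclass
class Case:
    """One remembered situation and the outfit worn (hypothetical structure)."""
    temp_c: float
    rain: bool
    outfit: str
    score: float = 0.0  # cumulative user feedback: +1 per like, -1 per dislike


class WardrobeAdvisor:
    def __init__(self, cases):
        self.cases = list(cases)

    @staticmethod
    def _distance(case, temp_c, rain):
        # Assumed similarity measure: 10 degrees C counts as much as a rain mismatch.
        return abs(case.temp_c - temp_c) / 10.0 + (0.0 if case.rain == rain else 1.0)

    def recommend(self, temp_c, rain):
        """Retrieve: return the nearest case, nudged by past feedback."""
        return min(self.cases,
                   key=lambda c: self._distance(c, temp_c, rain) - 0.1 * c.score)

    def feedback(self, case, liked):
        """Revise: record whether the user accepted the recommendation."""
        case.score += 1.0 if liked else -1.0

    def retain(self, temp_c, rain, outfit):
        """Retain: store what the user actually chose as a new case."""
        self.cases.append(Case(temp_c, rain, outfit))
```

A typical loop would call `recommend` with the day's forecast, show the outfit, then call `feedback` or, if the user picked something else, `retain` so the new choice is available next time.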

This research aims to develop a 360 video streaming environment that tracks and analyzes viewers' navigation patterns and gives future viewers access to these data as a viewing guide. We conducted a user study with 34 participants using two different 360 test videos and identified meaningful viewing patterns in relation to video genre and elements. This work was presented at CCSCNE, April 2018.
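One simple way to summarize navigation patterns is to bin each sampled view direction into a coarse yaw/pitch grid and count the visits per cell. The Python sketch below does that. The input format (yaw and pitch in degrees), the grid size, and the function names are assumptions for illustration, not details taken from the project.

```python
from collections import Counter


def bin_direction(yaw_deg, pitch_deg, yaw_bins=12, pitch_bins=6):
    """Map a view direction to a (row, col) cell of an equirectangular grid."""
    yaw = yaw_deg % 360.0                        # wrap yaw into [0, 360)
    pitch = max(-90.0, min(90.0, pitch_deg))     # clamp pitch to [-90, 90]
    col = min(int(yaw / (360.0 / yaw_bins)), yaw_bins - 1)
    row = min(int((pitch + 90.0) / (180.0 / pitch_bins)), pitch_bins - 1)
    return row, col


def viewing_heatmap(samples, yaw_bins=12, pitch_bins=6):
    """Count how often viewers looked at each grid cell.

    `samples` is an iterable of (yaw_deg, pitch_deg) pairs (assumed format).
    """
    return Counter(bin_direction(y, p, yaw_bins, pitch_bins) for y, p in samples)


def most_viewed_cell(heatmap):
    """Return the grid cell with the highest view count."""
    return heatmap.most_common(1)[0][0]
```

Computing one heatmap per time window of a video would give a per-segment "where did earlier viewers look" hint that could be shown to later viewers.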

To reduce symptoms of cybersickness under the assumption of sensory conflict theory, we propose overlaying a heads-up display (HUD) onto a virtual environment. The HUD adds a stationary element to the visual frame that agrees with the vestibular frame. A study of 9 participants indicated that the HUD reduces nausea-related symptoms of cybersickness, with the dynamic HUD reducing them more than the minimum HUD.

This research is centered on creating a central repository for archaeological 3D models. Through time, war, and other phenomena, pieces from history have been erased. A repository was developed using Unity incorporating security, speed, ability to change time and location, and seamless infusion of metadata with 3D models. The application is compatible with both desktops and virtual reality.

The goal of this research is to present the accomplishments of Computer Science majors at Connecticut College to prospective students. The system is designed for a 55" interactive display board installed on the 2nd floor of New London Hall. Two Art majors joined the project to assist with usability and graphic design. The system was built in Unity with NGUI and Universal Media Player packages.

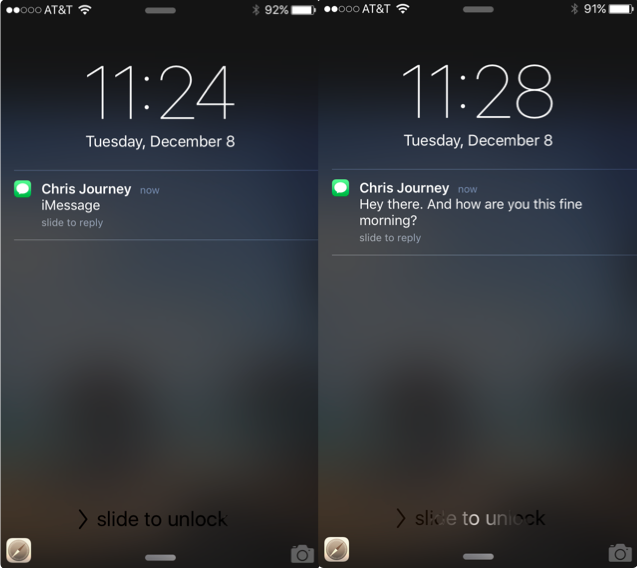

This study aims to better understand how notification presentation impacts a user's desire to switch tasks, specifically whether seeing a preview message is more compelling than not seeing the message at all. Through observational methods and previous research on our mental and emotional needs for connection, we develop a greater understanding of how disruptions engage users.

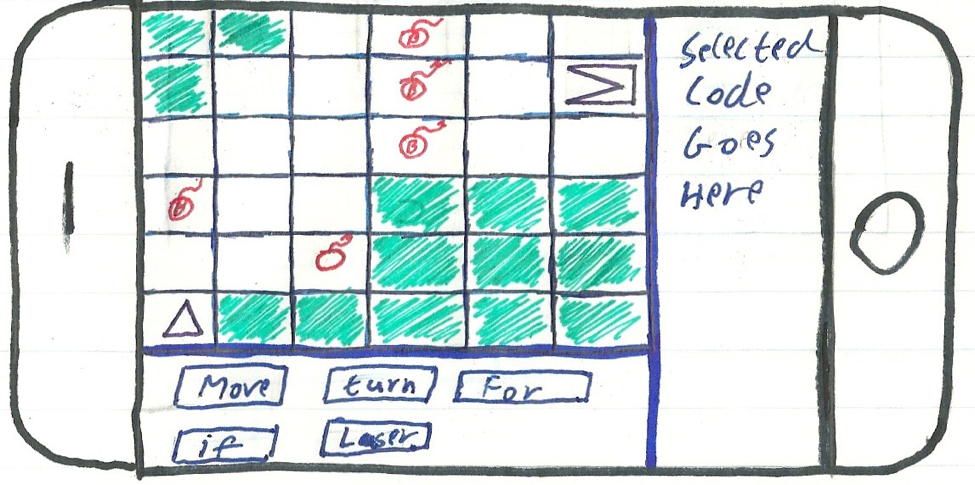

ESAT introduces children to computer science skills through adaptive puzzles. Behind the games, an adaptive system learns how the student learns and targets tips, puzzles, and tutorials to be more personal and efficient. The system focuses on understanding the student's zone of proximal development (ZPD) to gauge learning progress.

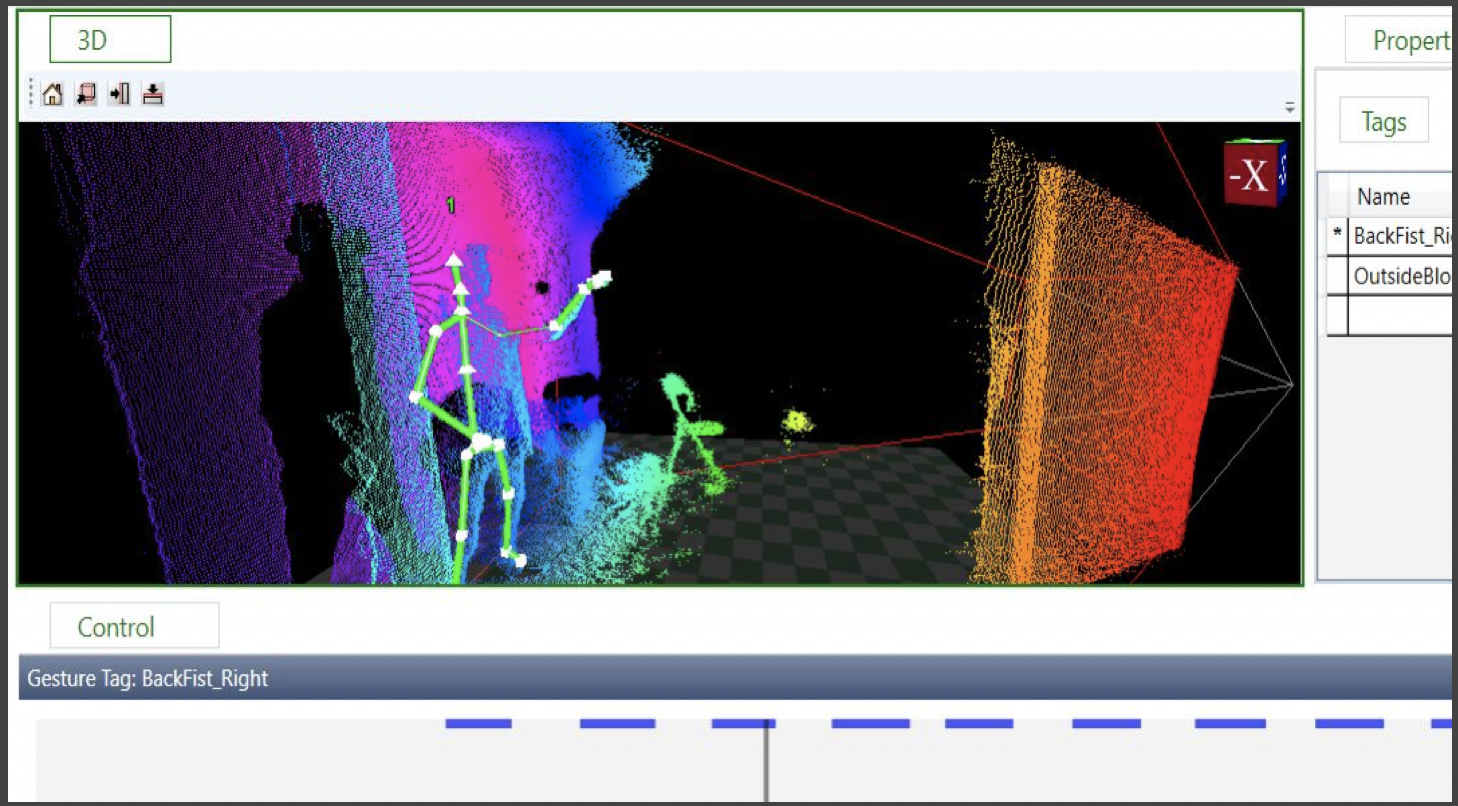

This project uses the Microsoft Kinect and Unity 3D to create a tool for learning Tae Kwon Do techniques that addresses the issues of expense and expertise in sports analytics. We propose an immersive training program in which gesture recognition works in conjunction with real-time joint analysis.
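A basic building block of joint analysis is the angle formed at a joint by its two adjacent limb segments, computed from three tracked 3D points. The following Python sketch shows that calculation only. The function name and the example coordinates are hypothetical, and it does not reflect how the project itself reads skeleton data from the Kinect.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at joint b between segments b->a and b->c.

    a, b, c are (x, y, z) positions, e.g. hip, knee, and ankle.
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0.0:
        raise ValueError("joint coincides with a neighboring point")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))
```

For example, `joint_angle(hip, knee, ankle)` is close to 180 for a fully extended leg and smaller when the knee is chambered, so comparing it against a target range is one simple way to give per-frame feedback on a kick.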

The Virtual Window project manipulates a live video feed in real time based on the user's head-tracking data, providing an interactive experience similar to looking out a window. OpenGL shaders correct for barrel distortion, achieving 100x faster calculation than a CPU-based implementation. User positioning uses Kinect v2. This project received a Research Matters Award ($2,000) in January 2015.
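Barrel distortion correction is usually done per pixel: for each output pixel, compute which location in the distorted frame to sample. The Python sketch below shows that mapping under the common polynomial radial model. The model choice, the coefficients `k1` and `k2`, and the function name are assumptions, since the description above does not state which model or values the project's shaders use.

```python
def source_coords(u, v, k1, k2=0.0):
    """Map a corrected-image texture coordinate to the distorted-frame coordinate.

    (u, v) are in [0, 1] with the optical center assumed at (0.5, 0.5).
    k1 and k2 are radial coefficients (hypothetical values; negative k1
    corresponds to barrel distortion in this convention).
    """
    # Move the origin to the image center and scale to [-1, 1].
    x, y = 2.0 * u - 1.0, 2.0 * v - 1.0
    r2 = x * x + y * y
    # Polynomial radial model: scale each point by a function of its radius.
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    xd, yd = x * scale, y * scale
    # Convert back to [0, 1] texture coordinates.
    return (xd + 1.0) / 2.0, (yd + 1.0) / 2.0
```

Because every pixel is computed independently from the same small formula, the same arithmetic ports directly to a fragment shader, where it runs once per output fragment in parallel.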

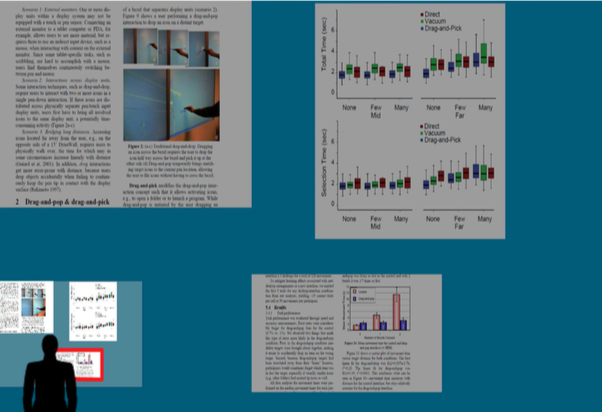

This research addresses the accessibility of open application windows on a large display. We propose a design with user-activated functionality to shrink display content to a comfortable viewing area, allowing users to access, transfer, or remove windows without moving around the room. This work was presented at CCSCNE, April 2015.

This research develops a 3D virtual avatar's ability to communicate and converse with humans. The research aims to expand the previously developed interactive avatar framework's capabilities to support more flexible dialog management, studying the intersection of Human-Computer Interaction and traditional Chatbot models.

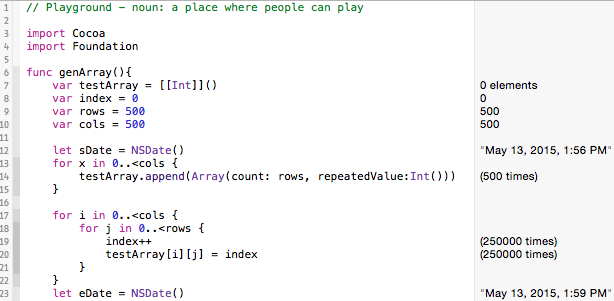

This research evaluates Apple's new Swift language by testing it against two other popular programming languages, Python and Java. Philip also hosted a workshop, Swift Bootcamp, for CS major/minor students.