2007 - 2012

University of Illinois at Chicago, Electronic Visualization Laboratory

Role and Framework

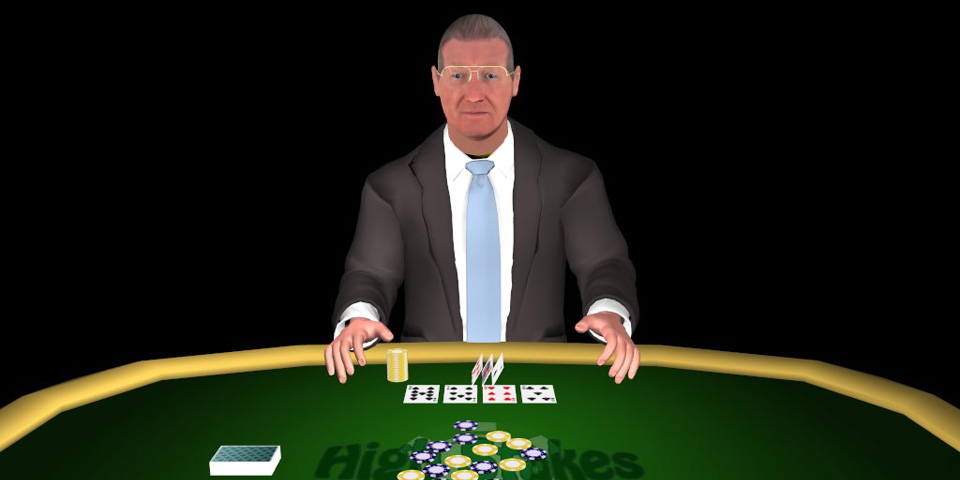

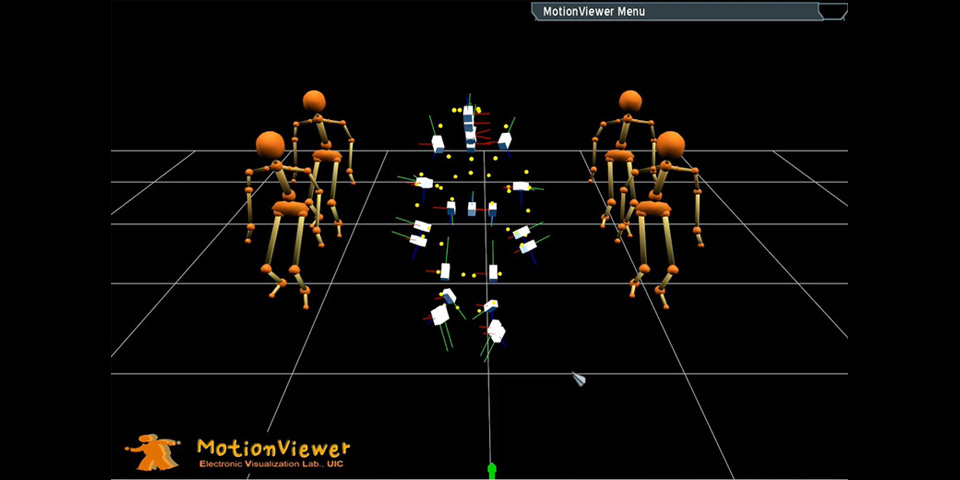

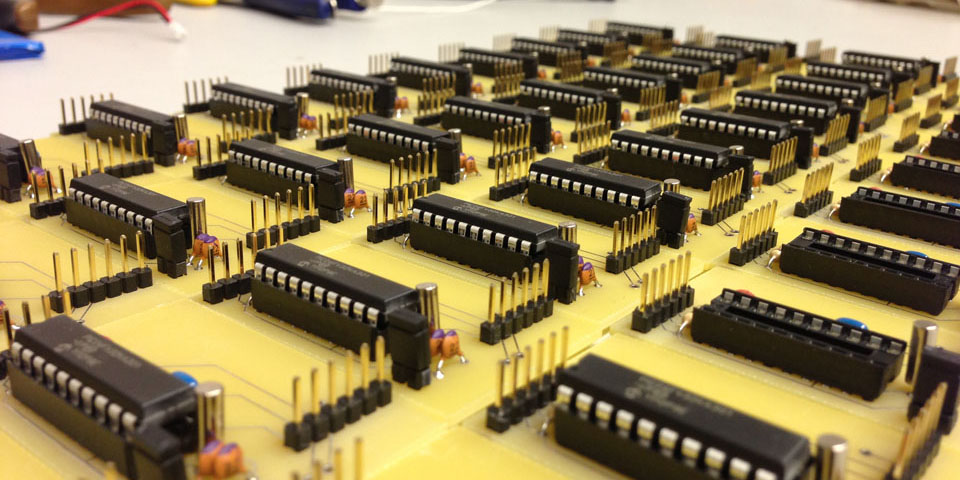

Led all visualization work, including the visualization framework, character modeling, animation, and facial expressions for real-time interaction. The main framework was implemented in C++ on the Object-Oriented Graphics Rendering Engine (OGRE), with external behavior control via Python scripting (Boost.Python bindings). Blendshape morphing controlled facial expressions in real time, and several custom GPU shaders were implemented to enhance visual quality. Character modeling, texturing, and rigging were done in Maya, with animation captured using a Vicon motion capture system and MotionBuilder.

Project Video Documentation

FFT Lip-Sync

An interactive virtual human that serves as a computer interface relies primarily on verbal communication, just as people do in everyday conversation. There are two ways to give the character a voice: a computer-synthesized voice (Text-To-Speech, TTS) or a pre-recorded voice. With TTS, the phoneme and viseme information from the TTS engine can drive the character's lip animation. A recorded voice has no such information attached to the wave file. An alternative is to analyze the waveform on the fly, extract certain characteristics of the human voice, and match lip motion to them. Several distinct frequency bands are tied to some of the dominant lip movements. An FFT analyzer was therefore implemented with the BASS audio library, using the power (level) of each frequency band to drive lip motion.
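The band-level idea can be sketched in a few lines. This is only an illustration, not the project's code: the original used the FFT from the BASS library inside the C++ framework, whereas this sketch substitutes a naive DFT in standard-library Python. The function names, the band edges, and the `gain` constant are assumptions made for the example.

```python
import cmath
import math


def band_levels(samples, sample_rate, bands):
    """Return the mean spectral power in each (low_hz, high_hz) band.

    Uses a naive DFT over only the bins that fall inside each band.
    """
    n = len(samples)
    # A Hann window reduces leakage between neighboring bands.
    windowed = [
        s * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
        for i, s in enumerate(samples)
    ]
    levels = []
    for low_hz, high_hz in bands:
        k_lo = max(1, math.ceil(low_hz * n / sample_rate))
        k_hi = min(n // 2, math.floor(high_hz * n / sample_rate))
        power = 0.0
        for k in range(k_lo, k_hi + 1):
            coeff = sum(
                w * cmath.exp(-2j * math.pi * k * i / n)
                for i, w in enumerate(windowed)
            )
            power += abs(coeff) ** 2
        levels.append(power / max(1, k_hi - k_lo + 1))
    return levels


def lip_weights(levels, gain):
    """Map band power to morph weights clamped to the range [0, 1]."""
    return [min(1.0, math.sqrt(level) * gain) for level in levels]


# Hypothetical setup: two bands, each assumed to drive one mouth shape.
BANDS = [(100, 500), (1000, 2000)]
RATE = 8000
tone = [math.sin(2 * math.pi * 300 * i / RATE) for i in range(256)]
weights = lip_weights(band_levels(tone, RATE, BANDS), gain=0.02)
```

In a real-time loop, the weights would be recomputed for each short audio frame and smoothed over time before being applied to the lip blendshapes, so that the mouth does not flicker.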

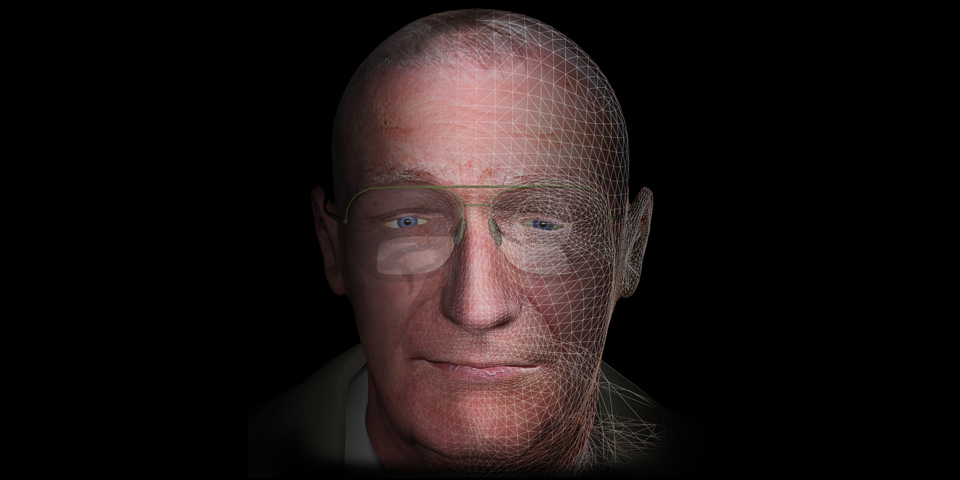

Reflective Material

The first person to be digitized for the virtual world was an elderly subject who wore glasses. Glass has an interesting property in graphics: it is reflective. At the very beginning of the project, a simple semi-transparent material was used for the glasses, but it was not as engaging as the real thing. The rendering approach was then refined with physical considerations: a slight curvature on the lens surface for simple refraction, multi-texturing to mimic dirt and specks on the lenses, and finally reflection of the real world into the virtual space. A webcam video streaming module was implemented to update the texture of the glass material dynamically. This became a favorite feature among users ("Oh, I can see me there, on glasses!!!"), and it helps engage a user in the interaction with the virtual character.

Face Tracking

Everyday face-to-face communication draws on more than words, and eye contact, or eye gaze, is one of its most important nonverbal cues. Because a large display such as a 55" screen presents the virtual human at life size, a user experiences much the same level of interaction as with a real person. When a user moves around or gestures with a hand, the character should pay attention to it. Facial feature and movement detection was implemented with the OpenCV library and the webcam video module. Video analysis runs in its own thread so that, on a multi-core machine, the real-time rendering frame rate is not sacrificed. The module supports multi-face detection and grid-based movement detection. In normal mode, the face location feeds into the character behavior routine to drive eye gaze. Grid cells where movement is detected serve as an area of interest that temporarily shifts the gaze, on the assumption that the user is pointing somewhere or making a gesture. The character responds naturally to this auxiliary information.
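A minimal sketch of the grid-based movement detection and the gaze choice described above, assuming simple frame differencing on grayscale frames stored as nested lists. The original module was built on OpenCV; the function names, thresholds, and the rule "look at the center of the active cells, otherwise at the face" are assumptions for illustration only.

```python
def motion_grid(prev, curr, rows, cols, pixel_threshold=25, cell_ratio=0.2):
    """Mark grid cells where enough pixels changed between two frames."""
    height, width = len(curr), len(curr[0])
    grid = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        y0, y1 = r * height // rows, (r + 1) * height // rows
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            changed = sum(
                1
                for y in range(y0, y1)
                for x in range(x0, x1)
                if abs(curr[y][x] - prev[y][x]) > pixel_threshold
            )
            area = (y1 - y0) * (x1 - x0)
            grid[r][c] = area > 0 and changed / area >= cell_ratio
    return grid


def gaze_target(face_center, grid, frame_size):
    """Look at the center of the active cells if any, else at the face."""
    rows, cols = len(grid), len(grid[0])
    width, height = frame_size
    active = [(r, c) for r in range(rows) for c in range(cols) if grid[r][c]]
    if not active:
        return face_center
    cx = sum((c + 0.5) * width / cols for _, c in active) / len(active)
    cy = sum((r + 0.5) * height / rows for r, _ in active) / len(active)
    return (cx, cy)


# Tiny 8x8 example: movement appears in the top-right quadrant only.
previous = [[0] * 8 for _ in range(8)]
current = [[200 if (y < 4 and x >= 4) else 0 for x in range(8)] for y in range(8)]
grid = motion_grid(previous, current, rows=2, cols=2)
target = gaze_target((1.0, 1.0), grid, frame_size=(8, 8))
```

A coarse grid keeps the per-frame cost low and is tolerant of camera noise, which suits a cue that only needs to nudge the gaze briefly rather than track a hand precisely.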

HD Video Player Integration

The LifeLike research framework is meant to be a rich environment for interacting with a user and presenting various kinds of information. Early on, a web interface was added to the main rendering framework to show textual information and online videos. However, control over the web-rendering module was insufficient; for instance, it could not detect the start or end of YouTube video playback or interrupt playback midway. A dynamic video texturing interface was therefore designed and implemented, using the Theora video library to extract video frame data and feed it to the dynamic material manager in the rendering module. All video playback runs in separate threads so that it does not stall the fast rendering loop.
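The threading pattern can be sketched as a decoder thread that fills a bounded frame queue while the render loop polls it without blocking. This is an assumed design for illustration, not the original C++ code: the class name `VideoTextureFeed`, its methods, and the stand-in frame source (any iterable of frames in place of a Theora decoder) are all hypothetical.

```python
import queue
import threading

END_OF_STREAM = object()  # sentinel that marks the end of playback


class VideoTextureFeed:
    """Decode frames on a worker thread; the render loop polls for them."""

    def __init__(self, frame_source, max_buffered=4):
        self._frames = queue.Queue(maxsize=max_buffered)
        self._stop = threading.Event()
        self._thread = threading.Thread(
            target=self._decode, args=(frame_source,), daemon=True
        )
        self.finished = False

    def start(self):
        self._thread.start()

    def _decode(self, frame_source):
        for frame in frame_source:
            if not self._offer(frame):
                return  # playback was interrupted
        self._offer(END_OF_STREAM)

    def _offer(self, item):
        # Retry with a timeout so a stop request is noticed even when
        # the buffer is full and the render loop is not consuming.
        while not self._stop.is_set():
            try:
                self._frames.put(item, timeout=0.05)
                return True
            except queue.Full:
                continue
        return False

    def poll(self):
        """Return the next decoded frame, or None if none is ready."""
        try:
            item = self._frames.get_nowait()
        except queue.Empty:
            return None
        if item is END_OF_STREAM:
            self.finished = True
            return None
        return item

    def stop(self):
        """Interrupt playback and wait for the worker thread to exit."""
        self._stop.set()
        self._thread.join()
```

The render loop would call `poll()` once per frame and upload any returned frame to the dynamic texture; `finished` and `stop()` correspond to the end-of-playback detection and mid-playback interruption that the web-based approach could not provide.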

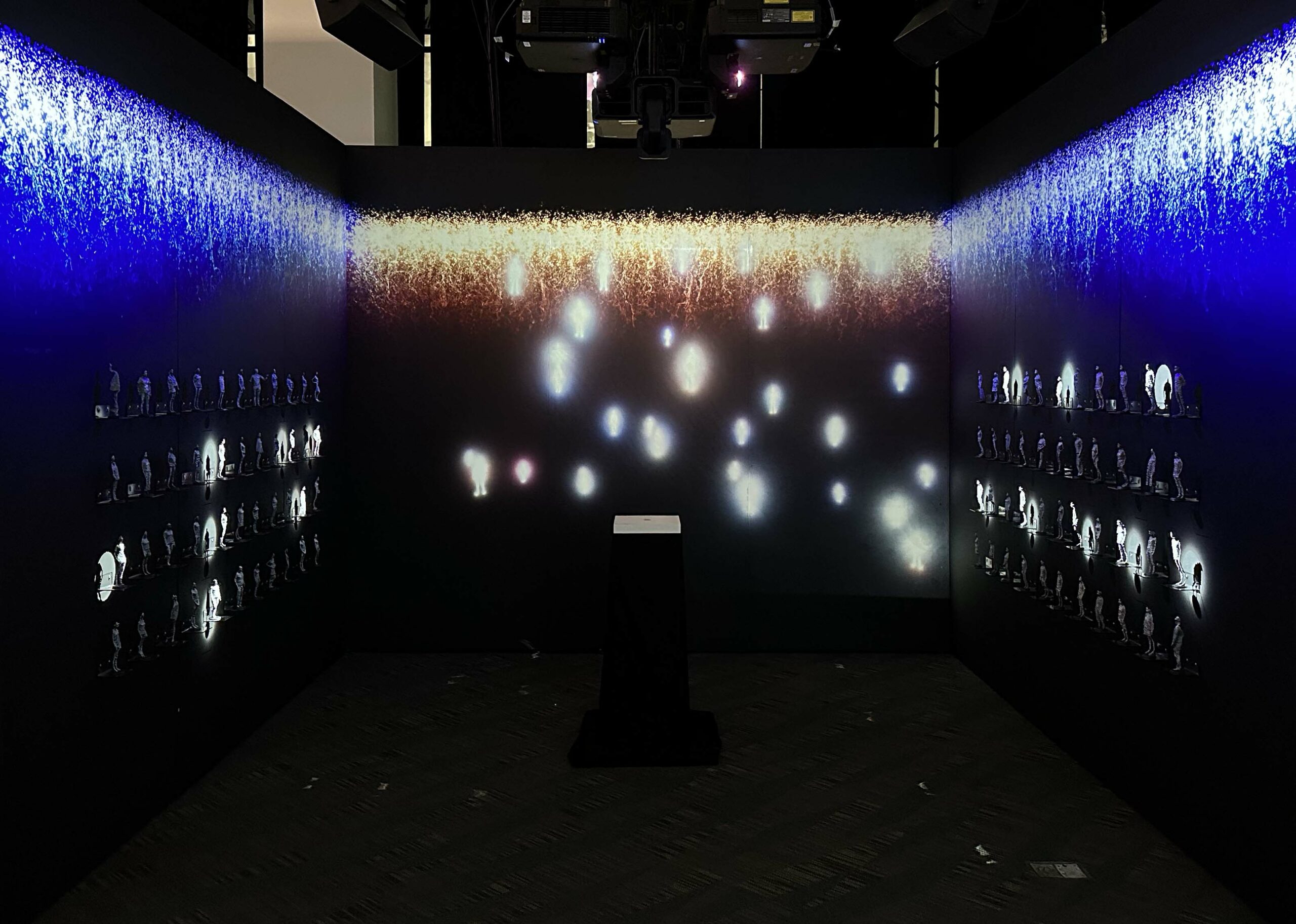

Astronaut App on Large Display Wall

One of the nicest things about working in a leading research institution is access to leading-edge resources. One such facility is a large-scale display wall, the so-called cyber-commons, used for activities such as new classroom environments, remote collaboration, and many other types of research projects. The Adler Planetarium is one of the lab's long-standing collaborators and has a similar display system running a high-resolution nebula image viewer for visitors. The idea was to provide a virtual tour guide for the galaxy. Unlike conventional museum installations, this system is interactive. A user can control the image viewer and see very high-resolution details, but most visitors are not experts in this domain and do not have a good sense of what an image shows. The virtual character helps explain the images and guides the user deeper into the universe. A user can talk to the astronaut tour guide and ask questions about the images on the display. The framework understands user speech (speech recognition) and responds with a synthesized voice, drawing on a rich written knowledge base. The system runs alongside the external image viewer and contextualizes its information based on the current view of the image on the screen.

Hair Simulation on GPU

Another interesting graphical feature was a simple hair simulation on the GPU. When a female character was created for another deployment, her hair became a problem: female characters generally have longer hair, and seeing such a soft part of the model move rigidly does not look realistic even at first glance. Instead of running a full hair simulation, which is not suitable for a real-time interactive application, a very simple bounce-back model was implemented without computing a real-world spring model. When an external force is detected (e.g., wind or head movement), vertices on the hair mesh shift slightly in the direction of the force. Imagine holding a long string and moving your hand around: the root follows your finger position immediately, while the other end follows slowly because of air friction and the time it takes to transfer the force. These varied motion characteristics are implemented using the UV texture coordinates of the hair patch model. Vertices near the roots of the hair strands have higher V values and move quickly; vertices near the tips have lower V values and lag behind. The whole simulation runs on the GPU, so it has little impact on performance.
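The V-weighted lag can be illustrated on the CPU as follows. The original ran as GPU shader code, and its exact formula is not given here, so this is only one plausible reading: each vertex offset eases toward the current head offset at a rate that grows with its V coordinate. The function names and the `tip_rate` parameter are assumptions.

```python
def follow_rate(v, tip_rate=0.1):
    """Blend from tip_rate at V = 0 (tip) to 1.0 at V = 1 (root)."""
    v = min(1.0, max(0.0, v))
    return tip_rate + (1.0 - tip_rate) * v


def step_hair(offsets, v_coords, head_offset, tip_rate=0.1):
    """Advance every vertex offset one frame toward the head offset."""
    new_offsets = []
    for offset, v in zip(offsets, v_coords):
        rate = follow_rate(v, tip_rate)
        new_offsets.append(
            tuple(o + (h - o) * rate for o, h in zip(offset, head_offset))
        )
    return new_offsets


# Three vertices along one strand: root (V = 1), middle, and tip (V = 0).
v_coords = [1.0, 0.5, 0.0]
offsets = [(0.0, 0.0, 0.0)] * 3
head = (1.0, 0.0, 0.0)  # the head has just moved one unit along x

after_one = step_hair(offsets, v_coords, head)
settled = after_one
for _ in range(100):
    settled = step_hair(settled, v_coords, head)
```

After one step the root has already reached the new head position while the tip has covered only a fraction of the distance; over later frames every vertex settles at the same offset, which gives the trailing motion without any spring solver.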
